Probability & the words we use: why it matters

The medieval game of Hazard

So difficult it is to show the various meanings and imperfections of words when we have nothing else but words to do it with. -John Locke

A well-studied phenomenon is that perceptions of probability vary greatly between people. You and I perceive the statement “high risk of an earthquake” quite differently. There are so many factors that influence this disconnect: one’s risk tolerance, events that happened earlier that day, cultural and language considerations, background, education, and much more. Words sometimes mean a lot, and other times, convey nothing at all. This is the struggle of any risk analyst when they communicate probabilities, forecasts, or analysis results.

Differences in perception can significantly impact decision making. Some groups of people have overcome this and think and communicate probabilistically (meteorologists and bookies come to mind), but other areas, such as business, lag far behind. My position has always been that if business leaders start to think probabilistically, like bookies, they can make significantly better risk decisions and gain an advantage over their competitors. I know from experience, however, that I need to first convince you there’s a problem.

The Roulette Wheel

A pre-COVID trip to Vegas reminded me of the simplicity in betting games and their usefulness in explaining probabilities. Early probability theory was developed to win at dice games, like hazard – a precursor to craps – not to advance the field of math.

Imagine this scenario: we walk into a Las Vegas casino together and I place $2,000 on black on the roulette wheel. I ask you, “What are my chances of winning?” How would you respond? It may be one of the following:

You have a good chance of winning

You are not likely to win

That’s a very risky bet, but it could go either way

Your probability of winning is 47.4%

Which answer above is most useful when placing a bet? The last one, right? But, which answer is the one you are most likely to hear? Maybe one of the first three?

All of the above could be typical answers to such a question, but the first three reflect attitudes and personal risk tolerance, while the last answer is a numerical representation of probability. The last one is the only one that should be used for decision making; however, the first three examples are how humans talk.
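For readers who want to see where a figure like 47.4% comes from, here is a minimal sketch. It assumes an American double-zero wheel (18 black pockets out of 38) and an even-money payout on black; neither detail is stated in the scenario above, and the function names are made up for illustration.

```python
from fractions import Fraction

# Assumption: American double-zero wheel (18 red, 18 black, 2 green pockets).
BLACK_POCKETS = 18
TOTAL_POCKETS = 38


def win_probability() -> Fraction:
    """Chance that a single spin lands on black."""
    return Fraction(BLACK_POCKETS, TOTAL_POCKETS)


def expected_value(stake: int) -> float:
    """Average result of the bet, assuming an even-money payout on black."""
    p = win_probability()
    return float(stake * p - stake * (1 - p))


print(f"{float(win_probability()):.1%}")   # 47.4%
print(round(expected_value(2000), 2))      # -105.26
```

Under those assumptions, the $2,000 bet loses about $105 on average, which is a far more usable statement than “a good chance of winning.”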

I don’t want us all walking around like C-3PO, quoting precise odds of successfully navigating an asteroid field at every turn, but consider this: not only is “a good chance of winning” not helpful, you and I probably have a different idea of what “good chance” means!

The Board Meeting

Let’s move from Vegas to our quarterly Board meeting. I’ve been in many situations where metaphors are used to describe probabilities and then used to make critical business decisions. A few recent examples that come to mind:

We’ll probably miss our sales target this quarter.

There’s a snowball’s chance in hell COVID infection rates will drop.

There’s a high likelihood of a data breach on the customer database.

Descriptors like the ones above are the de facto language of forecasting in business: they’re easy to communicate, simple to understand, and do not require a grasp of probability – which most people struggle with. There’s a problem, however. Research shows that our perceptions of probability vary widely from person to person. Perceptions of “very likely” events are influenced by many factors, such as gender, age, cultural background, and experience. Perceptions are further influenced by the time of day the person is asked to make the judgment, a number you might have heard recently that the mind anchors to, or confirmation bias (a tendency to pick evidence that confirms our own beliefs).

In short, when you report “There’s a high likelihood of a data breach on the customer database,” each Board member interprets “high likelihood” in their own way and makes decisions based on that private interpretation. Any consensus about how and when to respond is an illusion. People think they’re on the same page, but they are not. The CIA and the DoD noticed this problem in the 1960s and 1970s and set out to study it.

The CIA’s problem

One of the first papers to tackle this problem is the 1964 CIA paper Words of Estimative Probability by Sherman Kent. It’s now declassified and a fascinating read. Kent takes the reader through how problems arise in military intelligence when ambiguous phrases are used to communicate future events. For example, Kent describes a briefing from an aerial reconnaissance mission.

Aerial reconnaissance of an airfield

Analysts stated:

“It is almost certainly a military airfield.”

“The terrain is such that the [redacted] could easily lengthen the runways, otherwise improve the facilities, and incorporate this field into their system of strategic staging bases. It is possible that they will.”

“It would be logical for them to do this and sooner or later they probably will.”

Kent describes how difficult it is to interpret these statements meaningfully, let alone use them to make strategic military decisions.

The next significant body of work on this subject is “Handbook for Decision Analysis” by Scott Barclay et al. for the Department of Defense. In this now-famous 1977 study, 23 NATO officers were asked to match probabilities, expressed as percentages, to probability statements. The officers were given a series of 16 statements, including:

It is highly likely that the Soviets will invade Czechoslovakia.

It is almost certain that the Soviets will invade Czechoslovakia.

We believe that the Soviets will invade Czechoslovakia.

We doubt that the Soviets will invade Czechoslovakia.

Only the probabilistic words (such as “highly likely,” “almost certain,” “we believe,” and “we doubt”) were changed across the 16 statements. The results may be surprising:

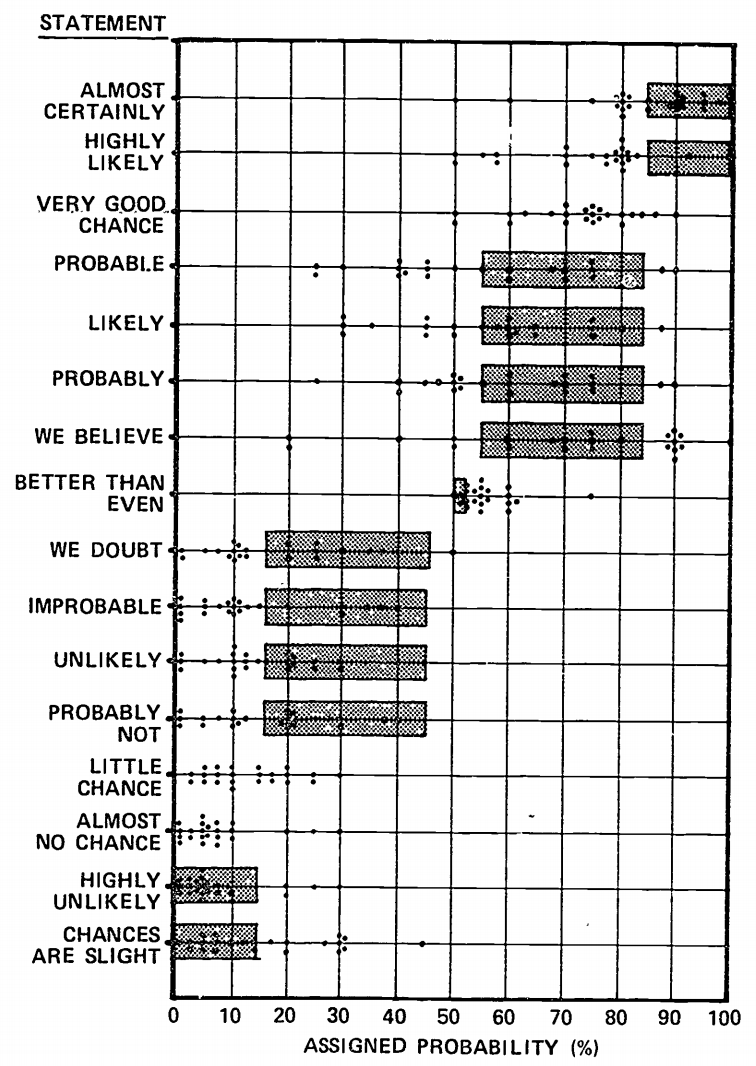

“Handbook for Decision Analysis” by Scott Barclay et al. for the Department of Defense, 1977

It is obvious that the officers’ perceptions of probabilities are all over the place. For example, there’s an overlap with “we doubt” and “probably,” and the inconsistencies don’t stop there. The most remarkable thing is that this phenomenon isn’t limited to 23 NATO officers – take any group of people, ask them the same questions, and you will see very similar results.

Can you imagine trying to plan for the Soviet invasion of Czechoslovakia, literal life and death decisions, and having this issue? Let’s suppose intelligence states there’s a “very good chance” of an invasion occurring. One officer thinks “very good chance” feels about 50/50 – a coin flip. Another thinks that’s a 90% chance. They both nod in agreement and continue war planning!
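The same mismatch can be sketched in a few lines. The 50% and 90% readings come from the example above; the officer labels, the function name, and the 75% action threshold are purely hypothetical, chosen only to show how one phrase can lead to opposite decisions.

```python
def who_acts(interpretations: dict, threshold: int) -> list:
    """Return the people whose reading of the phrase meets the action threshold."""
    return [name for name, pct in interpretations.items() if pct >= threshold]


# How two officers hear "very good chance", in percent (from the example above).
interpretations = {"officer_a": 50, "officer_b": 90}

# Hypothetical rule: mobilize only if the chance of invasion is at least 75%.
ACTION_THRESHOLD = 75

spread = max(interpretations.values()) - min(interpretations.values())
print(spread)                                        # 40
print(who_acts(interpretations, ACTION_THRESHOLD))   # ['officer_b']
```

Both officers heard the same words, yet only one of them would act. Had the briefing stated a number, the disagreement would have been about the threshold, which is a discussion worth having out loud.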

Can I duplicate the experiment?

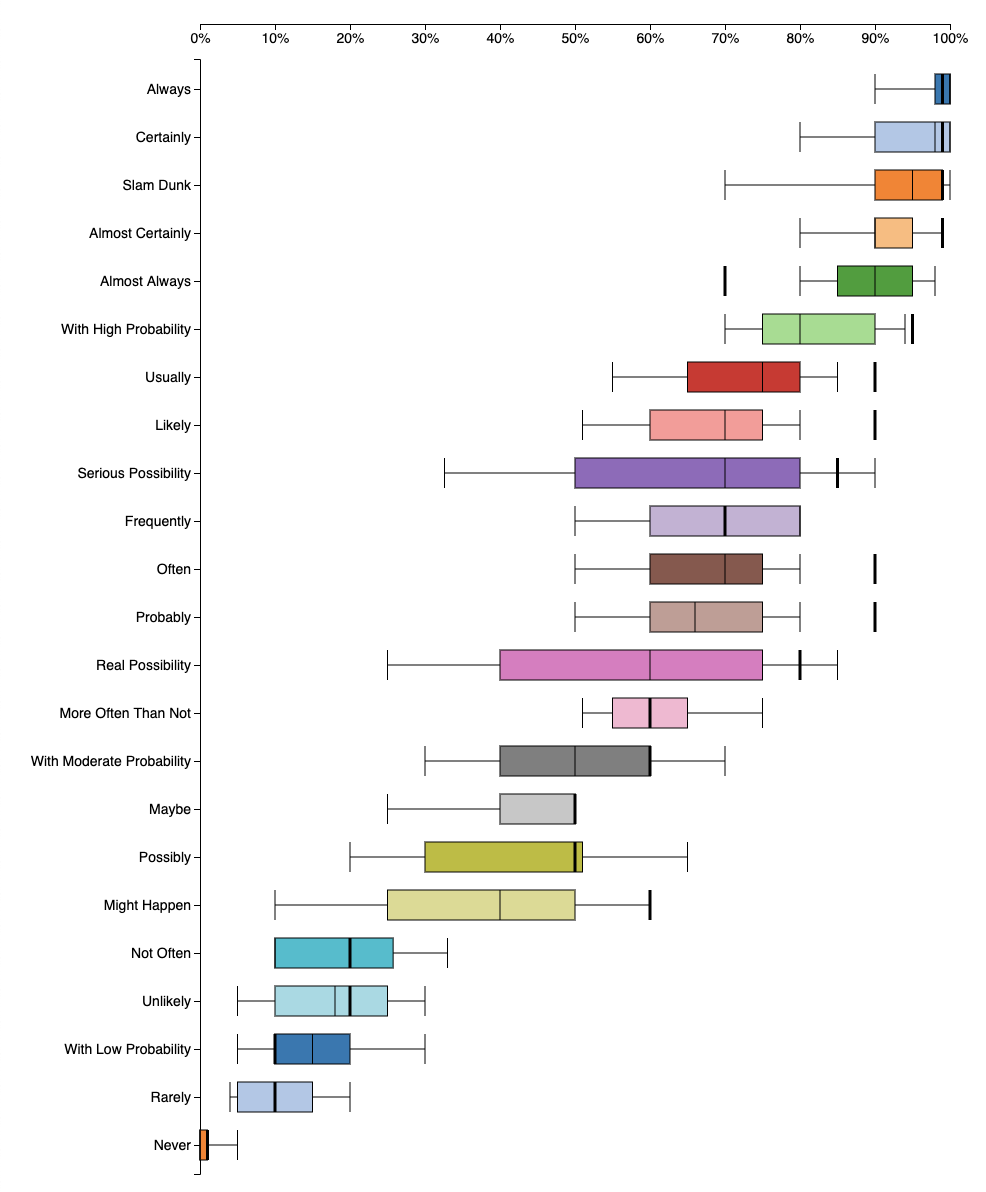

I recently discovered a massive, crowdsourced version of the NATO officer survey called www.probabilitysurvey.com. The website collects perceptions of probabilistic statements, then shows an aggregated view of all responses. I took the survey to see if I agreed with the majority of participants, or if I was way off base.

My perceptions of probabilities (from www.probabilitysurvey.com). The thick black bars are my answers

I was surprised that some of my responses were so different from everyone else’s, while others were right in line. I work with probabilities every day and help people translate what they think is possible, and probable, into probabilistic statements. Thinking back, I consider many terms in the survey to be synonymous with each other, while others perceive slight variations.

This is even more proof that if you and I are in a meeting, talking about high likelihood events, we will have different notions of what that means, leading to mismatched expectations and inconsistent outcomes. This can destroy the integrity of a risk analysis.

What can we do?

We can’t really “fix” this, per se. It’s a condition, not a problem. It’s like saying, “We need to fix the problem that everyone has a different idea of what ‘driving fast’ means.” We need to recognize that perceptions vary among people and adjust our own expectations accordingly. As risk analysts, we need to be intellectually honest when we present risk forecasts to business leaders. When we walk into a room and say “ransomware is a high likelihood event,” we know that every single person in the room hears “high” differently. One may think it’s right around the corner, and someone else may think it’s a once-every-ten-years event and that there is plenty of time to mitigate.

That’s the first step. Honesty.

Next, start thinking like a bookie. Experiment with using mathematical probabilities to communicate future events in any decision, risk, or forecast. Get to know people and their backgrounds; try out different techniques with different people. For example, someone who took meteorology classes in college might prefer probabilities and someone well-versed in gambling might prefer odds. Factor Analysis of Information Risk (FAIR), an information risk framework, uses frequencies because they are nearly universally understood.

For example,

“There’s a low likelihood of our project running over budget.”

Becomes…

There’s a 10% chance of our project running over budget.

Projects like this one, in the long run, will run over budget about one time in ten.
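To try out the different formats (probability, odds, frequency) on different audiences, the conversion can be sketched as below. This is an illustrative helper, not part of FAIR or any other framework; the function name and output wording are assumptions, and the frequency is expressed per project rather than per unit of time.

```python
from fractions import Fraction


def describe(p: Fraction) -> dict:
    """Express one probability as a percentage, odds against, and a frequency."""
    if not 0 < p < 1:
        raise ValueError("p must be strictly between 0 and 1")
    against = (1 - p) / p  # ratio of losing outcomes to winning outcomes
    return {
        "percent": f"{float(p):.0%}",
        "odds_against": f"{against.numerator} to {against.denominator}",
        "frequency": f"about 1 in {round(1 / p)}",
    }


# The 10% budget-overrun example from above.
print(describe(Fraction(1, 10)))
# {'percent': '10%', 'odds_against': '9 to 1', 'frequency': 'about 1 in 10'}
```

All three strings carry the same number; the only thing that changes is which form a given listener finds most natural.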

Take the quiz yourself on www.probabilitysurvey.com. Pass it around the office and compare results. Keep in mind there is no right answer; everyone perceives probabilistic language differently. If people are sufficiently surprised, test out using numbers instead of words.

Numbers are unambiguous and lead to clear objectives with measurable results. Numbers need to become the new de facto language of probabilities in business. Companies that forecast and assess risk using numbers instead of soft, qualitative adjectives will have a true competitive advantage.

Resources

Words of Estimative Probability by Sherman Kent

Handbook for Decision Analysis by Scott Barclay et al. for the Department of Defense

Take your own probability survey

Thinking, Fast and Slow by Daniel Kahneman | a deep exploration into this area and much, much more

*** This is a Security Bloggers Network syndicated blog from Blog - Tony Martin-Vegue authored by Tony MartinVegue. Read the original post at: https://www.tonym-v.com/blog/2020/08/09/predictive