Researchers reveal Light Commands: laser-based audio injection attacks on voice-control devices like Alexa, Siri and Google Assistant

Researchers from the University of Electro-Communications in Tokyo and the University of Michigan released a paper on Monday that raises alarming questions about the security of voice-control devices. In the paper, they present ways to manipulate Siri, Alexa, and other assistants using “Light Commands”, a vulnerability in MEMS (micro-electro-mechanical systems) microphones.

Light Commands was discovered in May this year. It allows attackers to use light to remotely inject inaudible and invisible commands into voice assistants such as Google Assistant, Amazon Alexa, Facebook Portal, and Apple Siri. The vulnerability could become more dangerous as voice-control devices grow in popularity.

How Light Commands work

Consumers use voice-control devices for many tasks, such as unlocking doors and making online purchases, with simple voice commands. The research team tested a handful of such devices and found that Light Commands can work on any smart speaker or phone that uses MEMS microphones. These microphones contain tiny components that convert sound into electrical signals, and they also respond to light aimed directly at them as if it were sound. By shining a laser through a window at the microphones inside smart speakers, tablets, or phones, a distant attacker can send inaudible and potentially invisible commands, which are then acted upon by Alexa, Portal, Google Assistant, or Siri.

Many users do not enable voice authentication or passwords to protect their devices from unauthorized use. An attacker can therefore use light-injected voice commands to unlock a victim’s smart-lock-protected doors, or even locate, unlock, and start various vehicles.

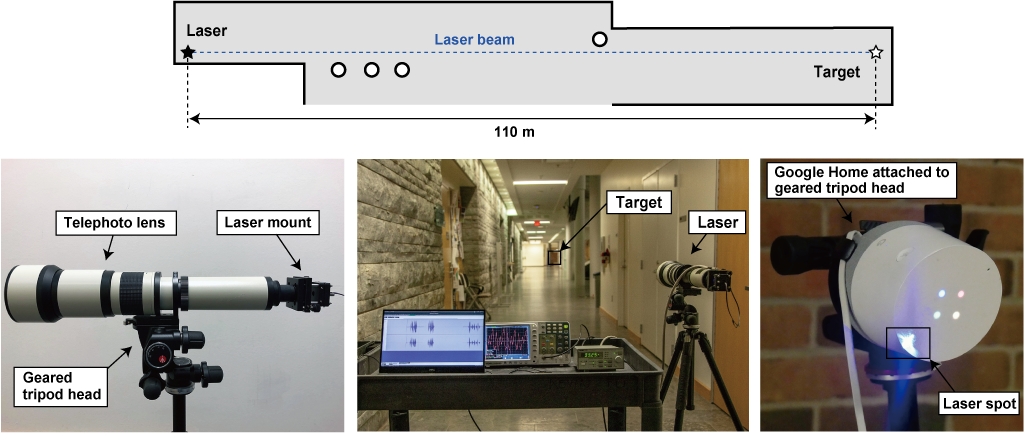

Further researchers also mentioned that Light Commands can be executed at long distances as well. To prove this they demonstrated the attack in a 110 meter hallway, the longest hallway available in the research phase. Below is the reference image where team demonstrates the attack, additionally they have captured few videos of the demonstration as well.

Source: Light Commands research paper. Experimental setup for exploring attack range at the 110 m long corridor

The Light Commands attack can be executed using a simple laser pointer, a laser driver, and a sound amplifier. A telephoto lens can be used to focus the laser for long range attacks.
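To make the role of the laser driver and amplifier more concrete, here is a minimal sketch of amplitude modulation, the general idea of making the laser's brightness follow an audio waveform. It assumes the audio is a list of samples normalized to the range -1 to 1 and that the driver adds the signal to a constant bias current. The function names and the current values (`bias_ma`, `depth_ma`) are hypothetical and chosen only for illustration; they are not taken from the paper.

```python
import math


def tone(freq_hz, duration_s, sample_rate=16000):
    """Generate a sine tone as a stand-in for a recorded voice command."""
    n = round(duration_s * sample_rate)
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]


def modulate_laser_current(audio, bias_ma=200.0, depth_ma=150.0):
    """Map normalized audio samples to a laser diode drive current in mA.

    The current swings around a constant bias so that light intensity
    tracks the audio waveform. Both values are illustrative assumptions.
    """
    if depth_ma > bias_ma:
        raise ValueError("modulation depth must not exceed the bias current")
    current = []
    for sample in audio:
        clipped = max(-1.0, min(1.0, sample))  # keep the signal within range
        current.append(bias_ma + depth_ma * clipped)
    return current


samples = tone(1000, 0.01)
current = modulate_laser_current(samples)
print(f"drive current ranges from {min(current):.1f} to {max(current):.1f} mA")
```

Keeping the modulation depth below the bias means the current never drops to zero, so the light intensity can follow both the positive and negative halves of the waveform.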

Detecting the Light Commands attacks

The researchers also describe how to detect a Light Commands attack. Although command injection via light makes no sound, an attentive user can notice the attacker’s light beam reflected on the target device. Alternatively, one can monitor the device’s verbal responses and light pattern changes, both of which serve as command confirmation.

They add that, so far, they have not seen any cases of the Light Commands attack being maliciously exploited.

Limitations in executing the attack

Light Commands do have some limitations in execution:

- Lasers must point directly at a specific component within the microphone to transmit audio information. Attackers need a direct line of sight and a clear pathway for lasers to travel.

- Most light signals are visible to the naked eye and would expose attackers. Also, voice-control devices respond out loud when activated, which could alert nearby people to foul play.

- Controlling advanced lasers with precision requires a certain degree of experience and equipment. There is a high barrier to entry when it comes to long-range attacks.

How to mitigate such attacks

In the paper, the researchers suggest adding an additional layer of authentication to voice assistants to mitigate the attack. They also suggest that manufacturers use sensor fusion techniques, such as acquiring audio from multiple microphones. When the attacker uses a single laser, only one microphone receives a signal while the others receive nothing. Manufacturers can therefore try to detect such anomalies and ignore the injected commands.
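The multi-microphone check can be sketched roughly as follows. This is only an illustration of the idea, not the researchers' implementation: it compares the RMS level of each microphone channel and flags input where one channel is far louder than all the others. The function names, `ratio_threshold`, and `noise_floor` are hypothetical values chosen for the example.

```python
import math


def rms(samples):
    """Root-mean-square level of one microphone channel."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def looks_like_single_mic_injection(channels, ratio_threshold=10.0, noise_floor=1e-3):
    """Return True if only one channel carries a meaningful signal.

    Real speech reaches every microphone at a similar level, while a
    single laser spot excites just one. Thresholds are assumptions.
    """
    if len(channels) < 2:
        raise ValueError("need at least two microphone channels")
    levels = sorted((rms(ch) for ch in channels), reverse=True)
    loudest, runner_up = levels[0], levels[1]
    if loudest <= noise_floor:
        return False  # nothing but silence on every channel
    return loudest > ratio_threshold * max(runner_up, noise_floor)


voice = [math.sin(2 * math.pi * 440 * i / 16000) for i in range(1600)]
quiet = [0.0001 * s for s in voice]

spoken = [voice, [0.8 * s for s in voice], [0.9 * s for s in voice]]
injected = [quiet, voice, quiet]

print(looks_like_single_mic_injection(spoken))    # False
print(looks_like_single_mic_injection(injected))  # True
```

A production system would need to account for microphone placement, gain differences, and echo, so a simple level ratio like this one is only a starting point.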

Another proposed approach is to reduce the amount of light reaching the microphone’s diaphragm. This can be done by using a barrier that physically blocks straight light beams to eliminate the line of sight to the diaphragm, or by placing a non-transparent cover on top of the microphone hole to reduce the amount of light hitting the microphone.

However, the researchers acknowledge that such physical barriers are only effective up to a point, as an attacker can always increase the laser power in an attempt to pass through the barriers and create a new light path.

Users discuss photoacoustic effect at play

On Hacker News, the research has drawn a lot of attention, with users finding it interesting and applauding the researchers for the demonstration. Some discuss the prices and features of the laser pointers and laser drivers that could be used to hack voice assistants.

Others discuss the physics behind the technique. One of them says, “I think the photoacoustic effect is at play here. Discovered by Alexander Graham Bell has a variety of applications. It can be used to detect trace gases in gas mixtures at the parts-per-trillion level among other things. An optical beam chopped at an audio frequency goes through a gas cell. If it is absorbed, there’s a pressure wave at the chopping frequency proportional to the absorption. If not, there isn’t. Synchronous detection (e.g. lock in amplifiers) knock out any signal not at the chopping frequency. You can see even tiny signals when there is no background. Hearing aid microphones make excellent and inexpensive detectors so I think that the mics in modern phones would be comparable.

Contrast this with standard methods where one passes a light beam through a cell into a detector, looking for a small change in a large signal.

https://chem.libretexts.org/Bookshelves/Physical_and_Theoret…

Hats off to the Michigan team for this very clever (and unnerving) demonstration.”
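The synchronous detection mentioned in the comment above can be illustrated with a small sketch. It is not from the paper or the comment; it simply shows, under simplified assumptions, how multiplying a signal by reference waves at the chopping frequency and averaging recovers a tiny component while rejecting a much larger one at another frequency. The function name and all frequencies and amplitudes are hypothetical.

```python
import math


def lock_in_amplitude(signal, ref_freq_hz, sample_rate):
    """Estimate the amplitude of the component at ref_freq_hz.

    The signal is multiplied by sine and cosine references and averaged,
    which cancels components at other frequencies when the record spans
    a whole number of cycles of each.
    """
    n = len(signal)
    in_phase = 0.0
    quadrature = 0.0
    for i, value in enumerate(signal):
        angle = 2 * math.pi * ref_freq_hz * i / sample_rate
        in_phase += value * math.sin(angle)
        quadrature += value * math.cos(angle)
    return 2 * math.hypot(in_phase / n, quadrature / n)


sample_rate = 8000
chop_hz = 1000  # assumed chopping frequency
hum_hz = 50     # assumed unrelated interference

# One second of data: a weak signal at the chopping frequency buried
# under interference that is 50 times stronger.
signal = [
    0.01 * math.sin(2 * math.pi * chop_hz * i / sample_rate + 0.3)
    + 0.5 * math.sin(2 * math.pi * hum_hz * i / sample_rate)
    for i in range(sample_rate)
]

print(f"recovered amplitude: {lock_in_amplitude(signal, chop_hz, sample_rate):.4f}")
```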

*** This is a Security Bloggers Network syndicated blog from Security News – Packt Hub authored by Fatema Patrawala. Read the original post at: https://hub.packtpub.com/researchers-reveal-light-commands-laser-based-audio-injection-attacks-on-voice-control-devices-like-alexa-siri-and-google-assistant/