
THE FORTHCOMING 2021 OWASP TOP TEN SHOWS THAT THREAT MODELING IS NO LONGER OPTIONAL

In 2003, two years after the organization was founded, the Open Web Application Security Project (OWASP) published the first OWASP Top Ten—an attempt to raise awareness about the biggest application security risks that organizations face.

Since then, updates to the Top Ten have been released once every three years or so to account for changes in the threat landscape and the increasing complexity of applications and methodologies for developing them. As most readers of this blog post know, the OWASP Top Ten has become one of the primary tools used around the world to help organizations prioritize their application security efforts. Even though this was not OWASP’s intention, the Top Ten is so respected that it is now something of a compliance checkbox at many organizations.

A NEW OWASP TOP TEN

As I write this post, the current version of the OWASP Top Ten is four years old. The latest update was delayed somewhat by the COVID-19 pandemic and by the complexity of the research involved. But OWASP has now finished its research and posted a beta version of the new Top Ten, along with a lot of supporting materials, so that interested parties can provide feedback before the list is finalized.

The draft of the 2021 OWASP Top Ten is still in review, and I am sure that there will be a few changes before the final release on September 24. Just as Contrast Security has done in the past, we submitted feedback on a number of details in the draft. We provided a lot of highly accurate data for the project, and I commend OWASP for a job well done.

Recognizing that organizations find our research and insights valuable, we scheduled a moderated webinar (“OWASP Co-founders Discuss the 2021 OWASP Top Ten”) on September 24 at 10 AM PDT | 1 PM EDT with Dave Wichers, who co-founded OWASP with me 20 years ago, during which we will discuss additions and changes to the final version of the Top Ten. We will also publish additional blog posts leading up to the final release of the Top Ten.

For this blog post, I offer some preliminary thoughts on how existing categories have changed, explain the three new categories introduced this year, and give a few tips on how everyone can prepare. As the title indicates, I think threat modeling must be an integral part of compliance with the 2021 OWASP Top Ten.

THE RESEARCH: AN UNPRECEDENTED DATA SCIENCE ACHIEVEMENT

I want to say again that OWASP did an outstanding job with the research behind the new Top Ten. It is a massive study of more than 500,000 applications using telemetry data provided by 13 application security vendors, including Contrast. This was a much bigger undertaking than the 2017 study in terms of both vendors and applications, but it was well worth the effort. There is really no other way to get good insight into what is going on in the world of application security, and OWASP is the best entity to do it.

I want to make a couple of observations about the dataset. First, since OWASP relies on vendors for the data it analyzes, its approach necessarily selects for applications that organizations are willing to pay to protect. As a result, this is a study of the most important applications out there, at organizations in all industries and of all sizes. This is a good thing, because inconsequential applications do not add distraction or noise to the results.

On the negative side, OWASP has no way to account for false positives in the dataset. In a cross-industry and cross-persona survey by Contrast Labs earlier this year, 80% of respondents reported that at least half of alerts generated by their scanning tools are false positives, and 38% put that ratio above three-quarters. With basically the entire application security industry providing data for the research, a similar percentage of the vulnerabilities identified in the data likely poses no risk.

Of course, another approach would be to do a smaller study using data from more accurate tools—something we did with the 2021 Application Security Observability Report. If you read this report alongside the OWASP draft, you will see that much of OWASP’s data tracks with ours (e.g., both studies saw broken access control moving up to number one), but there are also notable differences.

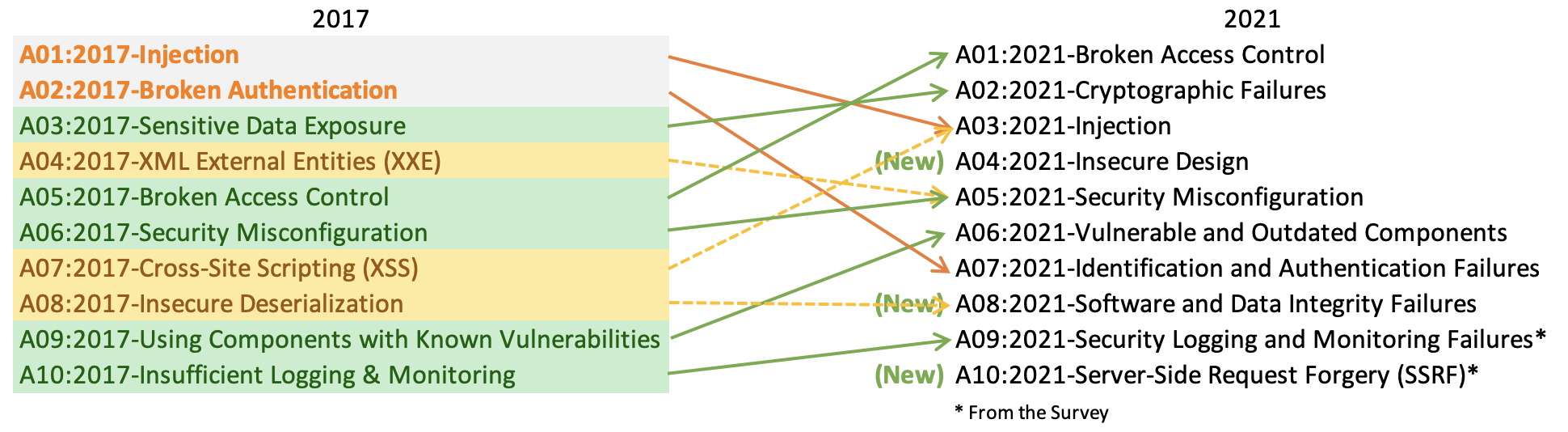

OWASP 2021 Top Ten pre-release draft

CATEGORIES REARRANGED AND RENAMED: FITTING INTO 10 BULLETS

As you can see in the above graphic, quite a few of the 2017 Top Ten categories were renamed or combined with other categories for 2021. To be honest, I blame David Letterman and his Top Ten lists: there is no fundamental reason why we should have 10 categories, but we needed a set number. OWASP aims for the Top Ten to represent a comprehensive list of the major application security risks we face today, and fitting them into 10 slots inevitably “forces” some odd groupings and bundling.

One way to keep the Top Ten as comprehensive as possible is to combine multiple risks into individual categories. For example, the 2017 OWASP Top Ten combined all injection vulnerabilities into one category that includes SQL, Command, Expression Language (EL), and LDAP Injection. For the 2021 Top Ten, Cross-Site Scripting (XSS) was added to the Injection category. The move is not illogical, since XSS is also a case of untrusted data being interpreted as code, in this case by the browser. But it means that recognizing the subcategories within many of the Top Ten categories, each of which requires a different kind of response, will be even more important once the final 2021 version is released.
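To make the difference in responses concrete, here is a minimal sketch using only Python's standard library. It assumes a SQLite table named `users` and two hypothetical helper functions. SQL injection is handled by binding input as a query parameter, while XSS is handled by encoding output for the HTML context it lands in; neither defense substitutes for the other, even though both now sit under one category.

```python
from __future__ import annotations

import html
import sqlite3


def find_user(conn: sqlite3.Connection, username: str) -> list[tuple]:
    """Look up a user by name; `username` is untrusted input."""
    # SQL injection defense: the "?" placeholder makes the driver bind
    # `username` as a value, so it is never parsed as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchall()


def render_greeting(username: str) -> str:
    """Build an HTML fragment that includes untrusted input."""
    # XSS defense: escape for the HTML body context so any markup in the
    # input is displayed as text rather than executed by the browser.
    return f"<p>Hello, {html.escape(username)}!</p>"


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute("INSERT INTO users (name) VALUES ('alice')")

    # The classic payload is treated as a literal name, so it matches nothing.
    print(find_user(conn, "' OR '1'='1"))
    print(find_user(conn, "alice"))
    print(render_greeting("<script>alert(1)</script>"))
```

Note that the escaping shown is only correct for HTML body text; attribute, URL, and JavaScript contexts each need their own encoding.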

Among other combinations, XML External Entities (XXE) was subsumed into the Security Misconfiguration category. This makes some sense in that XXE vulnerabilities happen when XML parsers are not configured to ignore DOCTYPE declarations, but it is strange because this “configuration” is most often done in the software as part of creating the parser. Separately, Broken Authentication was expanded to include vulnerabilities related to identification failures; hence the new name, Identification and Authentication Failures.
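To illustrate the point that XXE protection is usually a decision made in code when the parser is created, the following sketch builds a parser with Python's standard `xml.parsers.expat` module that refuses any DOCTYPE declaration outright, so no internal or external entities can be declared at all. The function and exception names are hypothetical, and in practice a vetted wrapper such as the third-party `defusedxml` package, or your platform's documented secure-parser settings, may be a better choice than a hand-rolled handler.

```python
from __future__ import annotations

import xml.parsers.expat


class ForbiddenDoctypeError(ValueError):
    """Raised when untrusted XML contains a DOCTYPE declaration."""


def parse_untrusted_xml(data: bytes) -> list[str]:
    """Return element names in document order, refusing any DOCTYPE."""
    names: list[str] = []
    parser = xml.parsers.expat.ParserCreate()

    def on_doctype(doctype_name, system_id, public_id, has_internal_subset):
        # The security "configuration" lives here, in application code:
        # rejecting the DOCTYPE means no entity can ever be declared.
        raise ForbiddenDoctypeError(f"DOCTYPE {doctype_name!r} is not allowed")

    def on_start(name, attrs):
        names.append(name)

    parser.StartDoctypeDeclHandler = on_doctype
    parser.StartElementHandler = on_start
    # Exceptions raised inside handlers propagate out of Parse().
    parser.Parse(data, True)
    return names


if __name__ == "__main__":
    print(parse_untrusted_xml(b"<order><item/></order>"))
    xxe = (b'<?xml version="1.0"?>'
           b'<!DOCTYPE r [<!ENTITY x SYSTEM "file:///etc/passwd">]><r>&x;</r>')
    try:
        parse_untrusted_xml(xxe)
    except ForbiddenDoctypeError as exc:
        print("rejected:", exc)
```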

Turning to open-source libraries, Using Components with Known Vulnerabilities was expanded beyond vulnerable libraries to include out-of-date libraries. The result is a newly formed Vulnerable and Outdated Components category. Inactive libraries and library classes, that is, code the application never invokes, pose minimal risk whether or not they have a vulnerability. Our 2021 Observability Report found that only 6% of the code in a typical application is active library code, 20% is active custom code, and the remaining 74% is inactive. It is important to note that outdated libraries pose risk only if they are both “active” and “vulnerable.” Still, keeping libraries up to date is good hygiene and makes it easier to keep components secure.
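The “active and vulnerable” rule can be expressed as a simple triage function. The sketch below assumes a hypothetical inventory in which each component records whether a newer release exists, how many known vulnerabilities affect its version, and whether runtime observation saw the application invoke it; the field names, labels, and sample entries are invented for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    # Hypothetical inventory record; real data would come from a software
    # composition analysis tool plus runtime observation of invoked code.
    name: str
    outdated: bool      # a newer release exists
    known_vulns: int    # known vulnerabilities affecting this version
    active: bool        # library code was observed being invoked


def triage(component: Component) -> str:
    """Classify a component: urgent only if it is both active and vulnerable."""
    if component.known_vulns > 0 and component.active:
        return "fix now"
    if component.known_vulns > 0 or component.outdated:
        return "hygiene"  # update when convenient
    return "ok"


if __name__ == "__main__":
    inventory = [
        Component("parser-lib", outdated=True, known_vulns=2, active=True),
        Component("report-lib", outdated=True, known_vulns=1, active=False),
        Component("util-lib", outdated=True, known_vulns=0, active=True),
        Component("http-lib", outdated=False, known_vulns=0, active=True),
    ]
    for c in inventory:
        print(f"{c.name}: {triage(c)}")
```

The design choice is deliberate: a vulnerable but never-invoked library still gets flagged, just as hygiene work rather than an emergency.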

NEW OWASP CATEGORIES: FORWARD-LOOKING DESCRIPTIONS OF RISK

In addition to quantifying the relative risk of existing vulnerability categories, OWASP aspires to anticipate what will happen in the future by creating two or three forward-looking categories. In the 2021 Top Ten, there are three new entries, and their final definitions are still evolving. Even so, they do a good job of reflecting emerging trends in threats against applications.

Insecure Design debuts at the fourth position in the Top Ten. The definition is not super crisp at the moment, but it will be tightened over time. This category reflects the awareness that the underlying architecture of an application has a big impact on how secure it is, something I have been saying for more than a decade. Organizations should do threat modeling and create security architectures whenever architectures are created or changed. Many organizations talk about “shifting left,” but they usually don’t mean shifting this far left; the implicit assumption is that application security does not start until coding begins. In contrast, this new category encourages development teams to take the time to design new applications with stronger, simpler, and more concise architectures.
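This post does not prescribe a particular threat modeling method. As one widely used option, swapped in here for illustration rather than taken from the post, the sketch below applies STRIDE-per-element to a small data flow diagram: each element type maps to the STRIDE threat categories that typically apply to it, yielding a checklist to review whenever the architecture is created or changed. The element names and the diagram itself are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

# STRIDE-per-element mapping as commonly presented for Microsoft's SDL.
# (Data stores that hold audit logs are often also checked for Repudiation.)
STRIDE_BY_KIND = {
    "external_entity": ["Spoofing", "Repudiation"],
    "process": ["Spoofing", "Tampering", "Repudiation",
                "Information disclosure", "Denial of service",
                "Elevation of privilege"],
    "data_store": ["Tampering", "Information disclosure", "Denial of service"],
    "data_flow": ["Tampering", "Information disclosure", "Denial of service"],
}


@dataclass(frozen=True)
class Element:
    name: str
    kind: str  # must be one of the keys of STRIDE_BY_KIND


def enumerate_threats(elements: list[Element]) -> list[tuple[str, str]]:
    """Return (element name, threat category) pairs to review."""
    threats = []
    for element in elements:
        if element.kind not in STRIDE_BY_KIND:
            raise ValueError(f"unknown element kind: {element.kind!r}")
        for category in STRIDE_BY_KIND[element.kind]:
            threats.append((element.name, category))
    return threats


if __name__ == "__main__":
    # Hypothetical data flow diagram for a small web application.
    dfd = [
        Element("Browser user", "external_entity"),
        Element("Browser -> API (HTTPS)", "data_flow"),
        Element("Order API", "process"),
        Element("Orders database", "data_store"),
    ]
    for name, category in enumerate_threats(dfd):
        print(f"{name}: {category}")
```

The output is only a starting list; the real work is deciding, for each pair, whether the design already mitigates the threat.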

Software and Data Integrity Failures comes in at number eight. The best-known recent example of a software integrity failure is the massive SolarWinds breach revealed last December. Attackers compromised the build pipeline and inserted malware into a scheduled software update, compromising the integrity of the software as a result. Personally, I think including data integrity and unsafe deserialization in this category is an unnecessary stretch. Rather, this category should focus entirely on protecting the integrity of software across the software development life cycle (SDLC), from the integrated development environment (IDE) through production.
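One narrow but concrete integrity control is refusing to use an artifact whose SHA-256 digest does not match a value pinned through a separate, trusted channel. The sketch below uses Python's `hashlib` and `hmac.compare_digest`; the function names and the pinned-digest workflow are assumptions for illustration. Note the limit that a case like SolarWinds makes plain: a digest check detects tampering after the digest was recorded, not malicious code inserted in the build pipeline before the artifact was hashed or signed, which is why integrity has to be protected at every stage of the SDLC.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 16) -> str:
    """Hash the file in chunks so large artifacts are not read into memory at once."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, expected_sha256: str) -> None:
    """Raise ValueError if the artifact's digest differs from the pinned value."""
    actual = sha256_of(path)
    # Normalize the pinned value to lowercase hex, then compare in constant time.
    if not hmac.compare_digest(actual, expected_sha256.strip().lower()):
        raise ValueError(f"integrity check failed for {path}: got {actual}")
```

A deployment step would call `verify_artifact` before installing or executing the artifact and stop the pipeline on failure.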

The third new category, appearing in the 10th position, is Server-Side Request Forgery (SSRF)—something that has generated some attention in the press and shows up in our data all over the place. As OWASP explains, “SSRF flaws occur whenever a web application is fetching a remote resource without validating the user-supplied URL. It allows an attacker to coerce the application to send a crafted request to an unexpected destination, even when protected by a firewall, VPN, or another type of network ACL.”
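A common first line of defense against SSRF is to validate the user-supplied URL against an allowlist before fetching it. The sketch below assumes a feature that should only ever reach two hypothetical hosts, and checks scheme, embedded credentials, host, and port with the standard `urllib.parse.urlsplit`. It is deliberately incomplete: a real fetcher would also need to disable or re-validate redirects, and network-level egress controls remain worthwhile.

```python
from __future__ import annotations

from urllib.parse import urlsplit

# Hypothetical allowlist: the only hosts this feature is meant to reach.
ALLOWED_HOSTS = frozenset({"api.example.com", "images.example.com"})


def is_allowed_url(url: str) -> bool:
    """Validate a user-supplied URL against a scheme/host/port allowlist."""
    try:
        parts = urlsplit(url)
        port = parts.port  # raises ValueError for a malformed or out-of-range port
    except ValueError:
        return False
    if parts.scheme != "https":
        return False
    # Reject embedded credentials: "https://api.example.com@evil.test/"
    # actually points at evil.test, a classic allowlist bypass.
    if parts.username is not None or parts.password is not None:
        return False
    # `hostname` is lowercased by urlsplit, so the comparison is case-insensitive.
    if parts.hostname not in ALLOWED_HOSTS:
        return False
    return port in (None, 443)
```

For example, `is_allowed_url("https://169.254.169.254/latest/meta-data/")` returns `False`, because the cloud metadata address is not on the allowlist.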

GETTING READY: COVERING THE NEW OWASP TOP TEN CATEGORIES

You may be wondering how you and your organization can prepare for the new list. The first step is to start thinking about the three new categories, which legitimately reflect emerging threats that we all need to be concerned about, and to start collecting data about them.

If you are unable to generate such data, now is the time to invest in the Contrast Application Security Platform. It automatically generates architecture diagrams based on what the running application does, which will help with the Insecure Design category. We also offer a variety of features to help protect the software supply chain, including the new features we recently introduced to reduce exposure to Dependency Confusion risk. The platform’s rule set detects all the vulnerabilities in the Top Ten—including the SSRF category, which is tricky to do accurately. Additionally, Contrast prevents OWASP Top Ten vulnerabilities from being exploited in production. This enables organizations to fix code, update libraries, and improve processes without exposure and fire drills. Finally, Contrast’s reporting capabilities make it easy for security and development teams to keep management, board members, and investors apprised of an organization’s compliance with the OWASP Top Ten.

TAKEAWAYS: THREAT MODELING AND OBSERVABILITY ARE NO LONGER OPTIONAL

The bottom line emerging from the upcoming 2021 OWASP Top Ten is that application threat modeling is no longer optional. OWASP, the National Institute of Standards and Technology (NIST), and the PCI Security Standards Council have all added threat modeling to their standards. And while every organization should have adopted threat modeling some time ago, the reality is that many have not. It is time to change that. Indeed, the recent White House executive order on improving the nation’s cybersecurity said the same thing, and every company that does business with the federal government will soon be required to have threat modeling in place.

To deliver effective threat modeling, application security tools must embed accurate rules for all the categories and subcategories of the 2021 Top Ten, including the new ones. Comprehensive reporting capabilities customized to the OWASP Top Ten are no longer optional. Finally, it is critical that organizations have full observability into every aspect of application security across the SDLC, from the earliest stages of development to production. If you are not already a Contrast customer, I encourage you to explore how Contrast can help you cover the latest OWASP Top Ten. And make sure to register for our upcoming webinar on September 24, during which Dave Wichers and I will provide insights on the 2021 Top Ten updates.

MORE RESOURCES

2021 OWASP TOP TEN PRE-RELEASE DRAFT

WEBINAR: OWASP CO-FOUNDERS DISCUSS THE 2021 OWASP TOP TEN

*** This is a Security Bloggers Network syndicated blog from AppSec Observer authored by Jeff Williams. Read the original post at: https://www.contrastsecurity.com/security-influencers/the-forthcoming-2021-owasp-top-ten-shows-that-threat-modeling-is-no-longer-optional