Risk Mythbusters: We need actuarial tables to quantify cyber risk

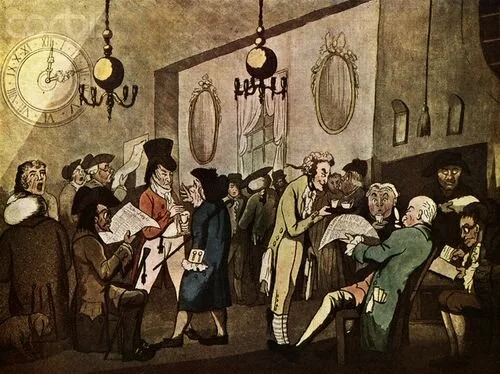

Risk management pioneers: The New Lloyd’s Coffee House, Pope’s Head Alley, London

The auditor stared blankly at me, waiting for me to finish speaking. Sensing a pause, he declared, “Well, actually, it’s not possible to quantify cyber risk. You don’t have cyber actuarial tables.” If I had a dollar for every time I heard that… you know how the rest goes.

There are many myths about cyber risk quantification that have become so common, they border on urban legend. The idea that we need vast and near-perfect historical data is a compelling and persistent argument, enough to discourage all but the most determined of risk analysts. Here’s the flaw in that argument: actuarial science is a varied and vast discipline, and insurers sell coverage on everything from automobile accidents to alien abduction, often without actuarial tables or even historical data. Waiting for “perfect” historical data is a fruitless exercise and will prevent the analyst from using the data at hand, no matter how sparse or flawed, to drive better decisions.

Insurance without actuarial tables

Many contemporary insurance products, such as car, house, fire, and life insurance, have rich historical data today. However, many insurance products have for decades – in some cases, centuries – been issued without historical data, actuarial tables, or even good information. For those still incredulous, consider the following examples:

Auto insurance: Issuing auto insurance was unheard of when the first policy was issued in 1898. Companies only insured horse-drawn carriages up to that point, and actuaries used data from other types of insurance to set a price.

Celebrities’ body parts: Policies on Keith Richards’ hands and David Beckham’s legs are excellent tabloid fodder, but also a great example of how actuaries are able to price rare events.

First few years of cyber insurance: Claims data was sparse in the 1970s, when this product was first conceived, but there was money to be made. Insurance companies set initial prices based on estimates and adjacent data. Prices were adjusted as claims data became available.

There are many more examples: bioterrorism, capital models, and reputation insurance to name a few.

How do actuaries do it?

Many professions, from cyber risk to oil and gas exploration, use the same estimation methods developed by actuaries hundreds of years ago. Find as much relevant historical data as possible – this can be adjacent data, such as the number of horse-drawn carriage crashes when setting a price for the first automobile policy – and bring it to the experts. Experts then apply reasoning, judgment, and their own experience to set insurance prices or estimate the probability of a data breach.
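To make the "start with adjacent data, then adjust as claims come in" idea concrete, here is a minimal sketch using a Beta-Binomial update. This is one common way to do it, not necessarily what any particular insurer does. The function names, the `weight` discount on adjacent data, and all of the numbers are hypothetical and chosen only for illustration.

```python
def prior_from_adjacent_data(events, exposures, weight=1.0):
    """Build a Beta(alpha, beta) prior for an annual event probability.

    events / exposures come from an adjacent data set (hypothetical here).
    weight < 1 discounts that data, reflecting expert judgment that it is
    only partly relevant to the new risk.
    """
    alpha = 1.0 + weight * events
    beta = 1.0 + weight * (exposures - events)
    return alpha, beta


def update_with_claims(alpha, beta, new_events, new_exposures):
    """Fold newly observed claims data into the existing estimate."""
    return alpha + new_events, beta + (new_exposures - new_events)


def expected_probability(alpha, beta):
    """Mean of the Beta distribution: the current best single estimate."""
    return alpha / (alpha + beta)


# Illustrative numbers only: 5 incidents in 100 company-years of adjacent
# data, trusted at 20% because it is not a perfect match for our risk.
alpha, beta = prior_from_adjacent_data(events=5, exposures=100, weight=0.2)
print(round(expected_probability(alpha, beta), 3))  # about 0.091

# Ten years of our own experience arrive, with one incident.
alpha, beta = update_with_claims(alpha, beta, new_events=1, new_exposures=10)
print(round(expected_probability(alpha, beta), 3))  # about 0.094
```

The estimate starts out leaning on the adjacent data and expert discount, then shifts toward the organization's own experience as it accumulates, which mirrors how early prices were adjusted once claims data became available.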

Subjective data encoded quantitatively isn’t bad! On the contrary, it’s very useful when there is deep uncertainty, when data is sparse or expensive to acquire, or when the risk is new and emerging.
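As a rough illustration of what "subjective data encoded quantitatively" can look like, the sketch below takes an expert's 90% confidence interval for the cost of an incident, fits a lognormal distribution to it, and runs a simple Monte Carlo simulation of annual loss. The lognormal choice, the function names, and the input figures are all assumptions made for the example, not a prescribed method.

```python
import math
import random
from statistics import NormalDist


def lognormal_from_90ci(low, high):
    """Turn an expert's 90% interval (5th and 95th percentiles) into
    lognormal parameters mu and sigma."""
    z = NormalDist().inv_cdf(0.95)  # roughly 1.645
    mu = (math.log(low) + math.log(high)) / 2
    sigma = (math.log(high) - math.log(low)) / (2 * z)
    return mu, sigma


def simulate_annual_loss(p_event, low, high, trials=10_000, seed=0):
    """Simulate one year many times: an incident occurs with probability
    p_event, and if it does, its cost is drawn from the fitted lognormal."""
    rng = random.Random(seed)  # seeded so runs are repeatable
    mu, sigma = lognormal_from_90ci(low, high)
    losses = []
    for _ in range(trials):
        if rng.random() < p_event:
            losses.append(rng.lognormvariate(mu, sigma))
        else:
            losses.append(0.0)
    return losses


# Hypothetical expert inputs: 10% chance of a breach per year, and a 90%
# interval of $100,000 to $1,000,000 for the cost if one happens.
losses = simulate_annual_loss(p_event=0.10, low=100_000, high=1_000_000)
average_loss = sum(losses) / len(losses)
worst_5_percent = sorted(losses)[int(0.95 * len(losses))]
print(f"Average annual loss: ${average_loss:,.0f}")
print(f"95th percentile annual loss: ${worst_5_percent:,.0f}")
```

The point is not the specific numbers, which are made up, but that a calibrated range from an expert is enough to produce a loss distribution a decision-maker can reason about.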

I’m always a little surprised when people reject better methods altogether, citing the lack of “perfect data,” then swing in the opposite direction to gut checks and wet finger estimation. The tools and techniques are out there, and they not only make cyber risk quantification possible but could give any company a competitive edge. Entire industries have been built around less-than-perfect data, and we as cyber risk professionals should not use a lack of perfect data as an excuse not to quantify cyber risk. If a value can be placed on Tom Jones’ chest hair, then certainly we can estimate the loss risk of a data incident… go ask the actuaries!

*** This is a Security Bloggers Network syndicated blog from Blog - Tony Martin-Vegue authored by Tony Martin-Vegue. Read the original post at: https://www.tonym-v.com/blog/2020/10/20/risk-mythbusters-we-need-actuarial-tables-to-quantify-cyber-risk